We’re all familiar with the term “accountability,” but do we really understand what it means as it relates to education? If you go back to the 1970s to the origins of the term, you’ll find that it referred to the framework and process of evaluating what produces the desired outcomes and reinforcing it while revising what doesn’t work. Jump forward to the 1990s, and it began to evolve into more of a contractual model where one party holds itself accountable for providing services that will produce a socially acceptable outcome. Here we are in the 2000s, and accountability has taken on a whole new meaning. We have a system where a third party holds the first party accountable for providing a service to a second party that produces an outcome determined by the third party.

In the 70s, and even the 90s to an extent, accountability was a two-way street. The responsibility of the teacher and school to deliver quality instruction to the child was no greater than the responsibility of the parent to send a child to school rested, fed, and ready to learn, and to provide reinforcement and support at home. The child had an equal responsibility to engage and receive instruction. If a child didn’t experience some level of success, one, two, or all of the contractual parties were at fault. In the accountability system in place now, the responsibility for a child’s success has shifted squarely onto the shoulders of the teacher.

It is important to understand this evolution when trying to decipher Louisiana’s accountability system as it pertains to the Every Student Succeeds Act (ESSA). The underlying goal of ESSA is to allow states to determine what is important when it comes to educational outcomes. The problem is, this only works when the state’s stakeholders are fully engaged in the process. When key contributors are excluded from the development of the plan, the goal of the plan comes into question.

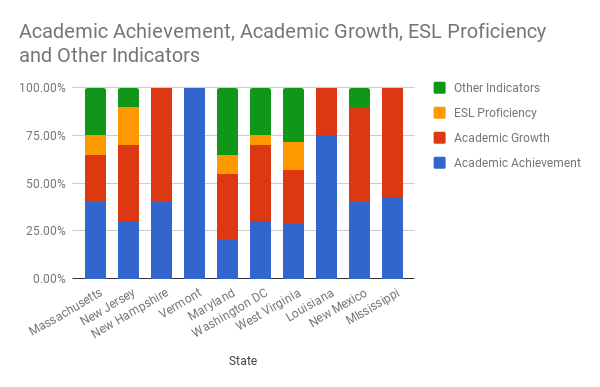

Year after year, Louisiana’s educational ranking bounces around 50th, 49th, or 48th. Even after two decades of accountability reforms, Louisiana remains at the bottom. I thought this would be a good opportunity to examine the differences in the ESSA plans from the states that are consistently in the top 5, and the states consistently in the bottom 5. Below is a chart illustrating how each state uses “meaningful differentiation” to identify poor performing schools.

ESSA gives states a framework to determine how accountability will be used to measure school performance using a few basic components. The primary components are academic achievement, academic growth, ESL proficiency (English as a Second Language), and a number of other indicators broken down into five or six categories, each containing several indicators such as attendance, discipline, teacher retention, etc. When you examine the components chosen by each state, it is easy to see what is important to that state. You can click on the state’s name to read its entire ESSA plan, if you dare.

#1 Massachusetts: Consistently ranked in the top 3 states, Massachusetts chose academic achievement as its primary measure of success, giving it a weight of 40%; however, a 25% weight for academic growth shows a recognition of the importance of measuring growth where achievement lacks. Most impressively, they chose a weight of 25% for “other indicators” and selected five indicators at 5% each. Massachusetts doesn’t require participation in state assessments, but penalizes schools if participation drops below 95% as required in ESSA.

#2 New Jersey: Another consistent top 3 performer, New Jersey places more emphasis on academic growth with a weight of 40% and 30% on achievement. They chose a rather high 20% weight for ESL, but where ESL doesn’t exist in a school, the 20% is shifted to other indicators raising the 10% to 30%. New Jersey doesn’t require participation in state assessments, but penalizes schools if participation drops below 95% as required in ESSA.

#3 New Hampshire: Here you’ll find an enormous weight of 60% for academic growth and 40% for achievement. That’s it. Nothing else to measure success. New Hampshire consistently ranks in the top 5 and values growth more than achievement. New Hampshire doesn’t require participation in state assessments, but penalizes schools if participation drops below 95% as required in ESSA.

#4 Vermont: Amazingly, Vermont has chosen to place the entirety of its measure of success on achievement; however, there is a certain logic in it. When your ranking is consistently in the top 3, your achievement level is already substantial. It is a statistical fact that the higher achievement is, the less room there is for growth. It isn’t an unreasonable measurement. Vermont doesn’t require participation in state assessments, but penalizes schools if participation drops below 95% as required in ESSA.

#5 Maryland: As expected, Maryland also values academic growth with a 35% weight and only 20% on academic achievement. Like Massachusetts, they value the “other indicators” with a 35% weight. Maryland doesn’t require participation in state assessments, but penalizes schools if participation drops below 95% as required in ESSA.

#47 Washington DC: Ranked at #47, DC consistently falls in the bottom 5 slots. DC chose to give a 40% weight to academic growth and 30% to achievement, and rightly so. When overall achievement is low, an expectation of growth over achievement is reasonable just as it applies to Vermont on the flip side. A 25% weight is given to other indicators. DC doesn’t require participation in state assessments, but penalizes schools if participation drops below 95% as required in ESSA.

#48 West Virginia: WV had trouble deciding what indicators were more important. They decided to give an equal weight of approximately 14% across the board with two falling in growth and two in achievement giving them each a weight of 28.5%. WV doesn’t require participation in state assessments, but penalizes schools if participation drops below 95% as required in ESSA.

#49 Louisiana: In the Bayou State, a gracious 25% is allotted to academic growth, but it is overshadowed by the 25% given to “other indicators,” where the indicators chosen are performance on Social Studies and Science tests. Combined with the 50% weight given to academic achievement, Louisiana has a whopping 75% weight on achievement in a state where overall achievement isn’t high and growth is a much more valuable commodity. In addition, Louisiana requires 100% participation in state assessments and penalizes schools for every non-participant.

#50 New Mexico: Even in the only state that battles with Louisiana each year for its position in the ranks, and with a state superintendent with the same background and support as Louisiana’s superintendent, a considerable amount of emphasis is given to academic growth at 50% and achievement at 40%. Add 10% for attendance and discipline, and that’s it. NM doesn’t require participation in state assessments, but penalizes schools if participation drops below 95% as required in ESSA.

#51 Mississippi: Our neighbors to the east, in Mississippi, have consistently sat near the bottom of the rankings, and they also recognize the importance of growth giving it a 57% weight while achievement gets the other 43%. Mississippi doesn’t require participation in state assessments, but penalizes schools if participation drops below 95% as required in ESSA.

It is easy to see here that no two states have developed an ESSA plan that measures success in exactly the same way. With the exception of Vermont and Louisiana, most of the states listed above place considerably more emphasis on growth compared to achievement. Vermont’s reasoning is believable. Louisiana’s reasoning is not. Superintendent John White continues to insist on the one-dimensional process of measuring success by performance on a test, which flies in the face of the rhetoric surrounding college and career readiness. Being ready for college, and/or a career, is not measured by success on a test. To be successful in college, and in a career, requires drive, determination, time management, discipline, desire, commitment, and many, many other things, none of which are measured in accountability. Until we have a state superintendent who understands and accepts this, we will remain at the bottom.
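For anyone who wants to check the growth-versus-achievement comparison, here is a rough sketch in Python. It uses only the achievement and growth weights quoted in the state summaries above and ignores the ESL and “other” categories. The variable names are mine, and treating Louisiana’s “other indicators” as achievement follows the argument in this post, not any official classification.

```python
# Achievement and growth weights (percent) as quoted in the state summaries above.
# ESL and "other indicators" are left out, so rows need not add up to 100.
weights = {
    "Massachusetts": {"achievement": 40, "growth": 25},
    "New Jersey": {"achievement": 30, "growth": 40},
    "New Hampshire": {"achievement": 40, "growth": 60},
    "Vermont": {"achievement": 100, "growth": 0},
    "Maryland": {"achievement": 20, "growth": 35},
    "Washington DC": {"achievement": 30, "growth": 40},
    "West Virginia": {"achievement": 28.5, "growth": 28.5},
    "Louisiana": {"achievement": 50, "growth": 25},
    "New Mexico": {"achievement": 40, "growth": 50},
    "Mississippi": {"achievement": 43, "growth": 57},
}

# States that weight growth more heavily than achievement.
growth_heavy = sorted(
    state for state, w in weights.items() if w["growth"] > w["achievement"]
)

# States that weight achievement more heavily than growth.
achievement_heavy = sorted(
    state for state, w in weights.items() if w["achievement"] > w["growth"]
)

# Assumption from this post: Louisiana's 25% "other indicators" are Social
# Studies and Science test scores, so they are counted as achievement here.
louisiana_other = 25
louisiana_effective_achievement = (
    weights["Louisiana"]["achievement"] + louisiana_other
)

print("Growth-heavy:", growth_heavy)
print("Achievement-heavy:", achievement_heavy)
print("Louisiana effective achievement weight:", louisiana_effective_achievement)
```

By these numbers, six of the ten states weight growth more heavily than achievement, West Virginia splits them evenly, and Louisiana’s effective achievement weight comes to 75%.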

ESSA requires some specific pieces. Take Louisiana’s bar graph. 75%-25% is the 2017-18 weighting for elementary and middle schools with no 8th grade. There’s a 5% Dropout Credit Accumulation Index for schools with an 8th grade – it actually would fall in the “other” category. That category will be expanded to include additional measures and implemented in 2019-20 if not earlier. The new ELPT test will be administered in spring 2018 for the first time and in 2018-19, will provide the data for an “improving English proficiency” indicator that will be rolled into the assessment index for all grade levels. High schools are weighted quite differently.

But the bar graphs imply some states don’t have the pieces that ESSA requires. I guess maybe they represent 2017-18 specifically. Everyone will have an “improving English proficiency” measure, an improvement indicator, and at least 1 “other.” ESSA doesn’t require the elements to be implemented until 2018-19 and allows a bit more time for brand new pieces to be designed, calibrated, etc.

On our home state, I was surprised when the plan was approved. It appears the US DOE is approving just about anything states propose. I will add that because my work had me following the details of the federal process, I have regulations in my head that Congress struck down by invoking some rarely used process. I think the sponsors of ESSA intended states to have a free hand to do what they want as they want.

Good info. I’m not arguing against any of your points, just suggesting that the graphs will be changing as ESSA reaches full implementation over the next 2 or 3 years.

I had a lengthy conversation with 2 LSBA folks this afternoon. There was some sort of meeting in your parish. They stopped in on their way back. It was implied that the LDoE will be granting a cushion in the Social Studies results. Seems the new assessment administered last year was more rigorous than anyone predicted.

The next 2 weeks will be interesting for district staff, and schools too when the official accountability release occurs the first week of November.

Keep up the good work. Keep up the good fight.

Thanks for your comments, Tom, and your willingness to help me understand over the last year. Yes, the plans could be explained in greater detail, but for most people, it would cause eyes to glaze over, and they would lose interest. I believe this represents 17-18 for most states, with the plan remaining fluid through 2025 or 2030, whatever the case may be. The central idea is for people to understand that claims of being able to compare to other states have no validity.

Agreed. Any time I do a presentation that has any degree of complexity or involves calculations, I have a picture of a donut that pops up before I get going. I tell them to beware of the Krispy Kreme Syndrome . . . where everyone sort of glazes over.

Many of the states that were in the Smarter Balanced or PARCC consortiums are now creating new tests like LA did in the late 90s, so it’s shifting from oranges to bananas. I think states may have separate cut scores even when using one of the consortium developed tests, so proficient isn’t necessarily proficient.

On Social Studies, I’ve heard from 2 different sources that the reading level of the test materials was far above grade level. This was determined by gauging some of the released test items; at least I hope they were released items and not screen shots of the actual test.

It’s Friday!!! Go bend them guitar strings.

Here is how I determine poor performing schools: look at the obscene test results. The state is horrific, averaging 33% mastery in basic skills. I live in an area that has nine recognized failing schools that should be shut down before subjecting kids to this extreme example of subpar education. One of my local elementary schools has an 8% mastery level for 2016. You want to crunch some numbers? Do the math on that: out of a 30-student classroom, 2-3 students come out the other side being able to read and do math at grade level. During the October local school board meeting there was more conversation about how unfair the school grades are and how they can twist the numbers to make the schools look better than there was about how to rescue these kids and ensure they have the opportunity for a quality education. https://www.cpsb.org/cms/lib/LA01907308/Centricity/domain/21/schoolreportcards/clifton.pdf

It is interesting that your choice for measuring poor performing schools is the very testing system that this post discredits. The test is invalid. The standards are inappropriate, and the system, in its current form, will label schools that are not failing as failing. It doesn’t matter what scale is used to measure; failing schools will always come out as failing. The problem is, this system is designed to make more schools fail. I am very familiar with JD Clifton, and the mistake that is often made when judging failing schools is assuming that the education being delivered is “subpar,” as you put it. By your own account, there are at least some children coming out of that school who are achieving mastery, right? So that can only mean that either 8% of the children are extremely smart and can excel even with awful teachers, or there are good teachers there, but 92% of the children just can’t cut it, right? Wrong. You can only come to this conclusion if you believe the source of the poor performance lies within the four walls of the classroom. I’ve made the suggestion several times that you take the faculty from an A school and the faculty from an F school and swap them. No one will do it, because they know the problem isn’t in the classroom. If you want to understand more of what I mean, take time to read Barriers to Education (click here), which I wrote last year and which mentions Clifton Elementary.